- Gmail unveils end-to-end encrypted messages. Only thing is: It’s not true E2EE.

Yes, encryption/decryption occurs on end-user devices, but there's a catch.

When Google announced Tuesday that end-to-end encrypted messages were coming to Gmail for business users, some people balked, noting it wasn’t true E2EE as the term is known in privacy and security circles. Others wondered precisely how it works under the hood. Here’s a description of what the new service does and doesn’t do, as well as some of the basic security that underpins it.

When Google uses the term E2EE in this context, it means that an email is encrypted inside Chrome, Firefox, or just about any other browser the sender chooses. As the message makes its way to its destination, it remains encrypted and can’t be decrypted until it arrives at its final destination, when it’s decrypted in the recipient's browser.

Giving S/MIME the heave-ho

The chief selling point of this new service is that it allows government agencies and the businesses that work with them to comply with a raft of security and privacy regulations and at the same time eliminates the massive headaches that have traditionally plagued anyone deploying such regulation-compliant email systems. Up to now, the most common means has been S/MIME, a standard so complex and painful that only the bravest and most well-resourced organizations tend to implement it.

- AI bots strain Wikimedia as bandwidth surges 50%

Automated AI bots seeking training data threaten Wikipedia project stability, foundation says.

On Tuesday, the Wikimedia Foundation announced that relentless AI scraping is putting strain on Wikipedia's servers. Automated bots seeking AI model training data for LLMs have been vacuuming up terabytes of data, growing the foundation's bandwidth used for downloading multimedia content by 50 percent since January 2024. It’s a scenario familiar across the free and open source software (FOSS) community, as we've previously detailed.

The Foundation hosts not only Wikipedia but also platforms like Wikimedia Commons, which offers 144 million media files under open licenses. For decades, this content has powered everything from search results to school projects. But since early 2024, AI companies have dramatically increased automated scraping through direct crawling, APIs, and bulk downloads to feed their hungry AI models. This exponential growth in non-human traffic has imposed steep technical and financial costs—often without the attribution that helps sustain Wikimedia’s volunteer ecosystem.

The impact isn’t theoretical. The foundation says that when former US President Jimmy Carter died in December 2024, his Wikipedia page predictably drew millions of views. But the real stress came when users simultaneously streamed a 1.5-hour video of a 1980 debate from Wikimedia Commons. The surge doubled Wikimedia’s normal network traffic, temporarily maxing out several of its Internet connections. Wikimedia engineers quickly rerouted traffic to reduce congestion, but the event revealed a deeper problem: The baseline bandwidth had already been consumed largely by bots scraping media at scale.

- MCP: The new “USB-C for AI” that’s bringing fierce rivals together

Model context protocol standardizes how AI uses data sources, supported by OpenAI and Anthropic.

What does it take to get OpenAI and Anthropic—two competitors in the AI assistant market—to get along? Despite a fundamental difference in direction that led Anthropic's founders to quit OpenAI in 2020 and later create the Claude AI assistant, a shared technical hurdle has now brought them together: How to easily connect their AI models to external data sources.

The solution comes from Anthropic, which developed and released an open specification called Model Context Protocol (MCP) in November 2024. MCP establishes a royalty-free protocol that allows AI models to connect with outside data sources and services without requiring unique integrations for each service.

"Think of MCP as a USB-C port for AI applications," wrote Anthropic in MCP's documentation. The analogy is imperfect, but it represents the idea that, similar to how USB-C unified various cables and ports (with admittedly a debatable level of success), MCP aims to standardize how AI models connect to the infoscape around them.

- What could possibly go wrong? DOGE to rapidly rebuild Social Security codebase.

A safe and proper rewrite should take years, not months.

The so-called Department of Government Efficiency (DOGE) is starting to put together a team to migrate the Social Security Administration’s (SSA) computer systems entirely off one of its oldest programming languages in a matter of months, potentially putting the integrity of the system—and the benefits on which tens of millions of Americans rely—at risk.

The project is being organized by Elon Musk lieutenant Steve Davis, multiple sources who were not given permission to talk to the media tell WIRED, and aims to migrate all SSA systems off COBOL, one of the first common business-oriented programming languages, and onto a more modern replacement like Java within a tight timeframe of a few months.

Under any circumstances, a migration of this size and scale would be a massive undertaking, experts tell WIRED, but the expedited deadline runs the risk of obstructing payments to the more than 65 million people in the US currently receiving Social Security benefits.

- Beyond RGB: A new image file format efficiently stores invisible light data

New Spectral JPEG XL compression reduces file sizes, making spectral imaging more practical.

Imagine working with special cameras that capture light your eyes can't even see—ultraviolet rays that cause sunburn or infrared heat signatures that reveal hidden writing. Or perhaps using specialized cameras sensitive enough to distinguish subtle color variations in paint that look just right under specific lighting. Scientists and engineers do this every day—and the resulting data files are so large, they're drowning in it.

A new compression format called Spectral JPEG XL might finally solve this growing problem in scientific visualization and computer graphics. Researchers Alban Fichet and Christoph Peters of Intel Corporation detailed the format in a recent paper published in the Journal of Computer Graphics Techniques (JCGT). It tackles a serious bottleneck for industries working with these specialized images. These spectral files can contain 30, 100, or more data points per pixel, causing file sizes to balloon into multi-gigabyte territory—making them unwieldy to store and analyze.

When we think of digital images, we typically imagine files that store just three colors: red, green, and blue (RGB). This works well for everyday photos, but capturing the true color and behavior of light requires much more detail. Spectral images aim for this higher fidelity by recording light's intensity not just in broad RGB categories, but across dozens or even hundreds of narrow, specific wavelength bands. This detailed information primarily spans the visible spectrum and often extends into near-infrared and near-ultraviolet regions crucial for simulating how materials interact with light accurately.
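To see why dozens or hundreds of bands per pixel push files into multi-gigabyte territory, here is a rough back-of-the-envelope sketch. The resolution (3840×2160) and the 32-bit sample size are assumptions chosen for illustration, not figures from the paper, and the function name is hypothetical.

```python
def raw_image_bytes(width: int, height: int, bands: int, bytes_per_sample: int) -> int:
    """Uncompressed size of an image storing `bands` samples per pixel."""
    return width * height * bands * bytes_per_sample


# Assumptions for illustration only: a 4K frame with 32-bit float samples.
WIDTH, HEIGHT = 3840, 2160
BYTES_PER_SAMPLE = 4

rgb_bytes = raw_image_bytes(WIDTH, HEIGHT, 3, BYTES_PER_SAMPLE)
spectral_bytes = {
    bands: raw_image_bytes(WIDTH, HEIGHT, bands, BYTES_PER_SAMPLE)
    for bands in (30, 100)
}

print(f"RGB (3 bands): {rgb_bytes / 1e9:.2f} GB")
for bands, size in spectral_bytes.items():
    # Raw size grows linearly with the number of bands per pixel.
    print(f"{bands} bands: {size / 1e9:.2f} GB ({size / rgb_bytes:.1f}x RGB)")
```

Under these assumptions, a single 100-band frame already exceeds 3 GB before any compression, which is the kind of bottleneck the format is meant to address.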

- Oracle has reportedly suffered 2 separate breaches exposing thousands of customers’ PII

Alleged breaches affect Oracle Cloud and Oracle Health.

Oracle isn’t commenting on recent reports that it has experienced two separate data breaches that have exposed sensitive personal information belonging to thousands of its customers.

The most recent data breach report, published Friday by Bleeping Computer, said that Oracle Health—a health care software-as-a-service business the company acquired in 2022—had learned in February that a threat actor accessed one of its servers and made off with patient data from US hospitals. Bleeping Computer said Oracle Health customers have received breach notifications that were printed on plain paper rather than official Oracle letterhead and were signed by Seema Verma, the executive vice president & GM of Oracle Health.

The other report of a data breach occurred eight days ago, when an anonymous person using the handle rose87168 published a sampling of what they said were 6 million records of authentication data belonging to Oracle Cloud customers. Rose87168 told Bleeping Computer that they had acquired the data a little more than a month earlier after exploiting a vulnerability that gave access to an Oracle Cloud server.

- Gemini hackers can deliver more potent attacks with a helping hand from… Gemini

Hacking LLMs has always been more art than science. A new attack on Gemini could change that.

In the growing canon of AI security, the indirect prompt injection has emerged as the most powerful means for attackers to hack large language models such as OpenAI’s GPT-3 and GPT-4 or Microsoft’s Copilot. By exploiting a model's inability to distinguish between, on the one hand, developer-defined prompts and, on the other, text in external content LLMs interact with, indirect prompt injections are remarkably effective at invoking harmful or otherwise unintended actions. Examples include divulging end users’ confidential contacts or emails and delivering falsified answers that have the potential to corrupt the integrity of important calculations.

Despite the power of prompt injections, attackers face a fundamental challenge in using them: The inner workings of so-called closed-weights models such as GPT, Anthropic’s Claude, and Google’s Gemini are closely held secrets. Developers of such proprietary platforms tightly restrict access to the underlying code and training data that make them work and, in the process, make them black boxes to external users. As a result, devising working prompt injections requires labor- and time-intensive trial and error through redundant manual effort.

Algorithmically generated hacks

For the first time, academic researchers have devised a means to create computer-generated prompt injections against Gemini that have much higher success rates than manually crafted ones. The new method abuses fine-tuning, a feature offered by some closed-weights models for training them to work on large amounts of private or specialized data, such as a law firm’s legal case files, patient files or research managed by a medical facility, or architectural blueprints. Google makes its fine-tuning for Gemini’s API available free of charge.

- OpenAI’s new AI image generator is potent and bound to provoke

The visual apocalypse is probably nigh, but perhaps seeing was never believing.

The arrival of OpenAI's DALL-E 2 in the spring of 2022 marked a turning point in AI, when text-to-image generation suddenly became accessible to a select group of users, creating a community of digital explorers who experienced wonder and controversy as the technology automated the act of visual creation.

But like many early AI systems, DALL-E 2 struggled with consistent text rendering, often producing garbled words and phrases within images. It also had limitations in following complex prompts with multiple elements, sometimes missing key details or misinterpreting instructions. These shortcomings left room for improvement that OpenAI would address in subsequent iterations, such as DALL-E 3 in 2023.

On Tuesday, OpenAI announced new multimodal image-generation capabilities that are directly integrated into its GPT-4o AI language model, making it the default image generator within the ChatGPT interface. The integration, called "4o Image Generation" (which we'll call "4o IG" for short), allows the model to follow prompts more accurately (with better text rendering than DALL-E 3) and respond to chat context for image modification instructions.

- Broadcom’s VMware says Siemens pirated “thousands” of copies of its software

VMware claims Siemens showed it a list of the VMware products it's using unlicensed.

VMware is suing the US arm of industrial giant Siemens AG. The Broadcom company claims that Siemens outed itself by revealing to VMware that it downloaded and distributed multiple copies of VMware products without buying a license.

VMware filed the lawsuit (PDF) on March 21 in the US District Court for the District of Delaware, as spotted by The Register.

In the complaint, VMware says that it has had a Master Software License and Service Agreement with Siemens since November 28, 2012. The virtualization company claims that in September, Siemens sent VMware a purchase order for maintenance and support services. Siemens was reportedly looking to exercise a previously agreed-upon option for a one-year renewal of support services. However, the list of VMware technology that Siemens was seeking support for "included a large number of products for which [VMware] had no record of Siemens AG purchasing a license," the complaint says.

- Open source devs say AI crawlers dominate traffic, forcing blocks on entire countries

AI bots hungry for data are taking down FOSS sites by accident, but humans are fighting back.

Software developer Xe Iaso reached a breaking point earlier this year when aggressive AI crawler traffic from Amazon overwhelmed their Git repository service, repeatedly causing instability and downtime. Despite configuring standard defensive measures—adjusting robots.txt, blocking known crawler user-agents, and filtering suspicious traffic—Iaso found that AI crawlers continued evading all attempts to stop them, spoofing user-agents and cycling through residential IP addresses as proxies.

Desperate for a solution, Iaso eventually resorted to moving their server behind a VPN and creating "Anubis," a custom-built proof-of-work challenge system that forces web browsers to solve computational puzzles before accessing the site. "It's futile to block AI crawler bots because they lie, change their user agent, use residential IP addresses as proxies, and more," Iaso wrote in a blog post titled "a desperate cry for help." "I don't want to have to close off my Gitea server to the public, but I will if I have to."

Iaso's story highlights a broader crisis rapidly spreading across the open source community, as what appear to be aggressive AI crawlers increasingly overload community-maintained infrastructure, causing what amounts to persistent distributed denial-of-service (DDoS) attacks on vital public resources. According to a comprehensive recent report from LibreNews, some open source projects now see as much as 97 percent of their traffic originating from AI companies' bots, dramatically increasing bandwidth costs, causing service instability, and burdening already stretched-thin maintainers.

- Europe is looking for alternatives to US cloud providers

Some European cloud companies have seen an increase in business.

The global backlash against the second Donald Trump administration keeps on growing. Canadians have boycotted US-made products, anti–Elon Musk posters have appeared across London amid widespread Tesla protests, and European officials have drastically increased military spending as US support for Ukraine falters. Dominant US tech services may be the next focus.

There are early signs that some European companies and governments are souring on their use of American cloud services provided by the three so-called hyperscalers. Between them, Google Cloud, Microsoft Azure, and Amazon Web Services (AWS) host vast swathes of the Internet and keep thousands of businesses running. However, some organizations appear to be reconsidering their use of these companies’ cloud services—including servers, storage, and databases—citing uncertainties around privacy and data access fears under the Trump administration.

“There’s a huge appetite in Europe to de-risk or decouple the over-dependence on US tech companies, because there is a concern that they could be weaponized against European interests,” says Marietje Schaake, a nonresident fellow at Stanford’s Cyber Policy Center and a former decadelong member of the European Parliament.

- You can now download the source code that sparked the AI boom

CHM releases code for 2012 AlexNet breakthrough that proved "deep learning" could work.

On Thursday, Google and the Computer History Museum (CHM) jointly released the source code for AlexNet, the convolutional neural network (CNN) that many credit with transforming the AI field in 2012 by proving that "deep learning" could achieve things conventional AI techniques could not.

Deep learning, which uses multi-layered neural networks that can learn hierarchical representations directly from data without explicit programming, represented a significant departure from many earlier traditional AI approaches that relied on hand-crafted rules and features.

The Python code, now available on CHM's GitHub page as open source software, offers AI enthusiasts and researchers a glimpse into a key moment of computing history. AlexNet served as a watershed moment in AI because it could identify objects in photographs with unprecedented accuracy, correctly classifying images into one of 1,000 categories, like "strawberry," "school bus," or "golden retriever," with significantly fewer errors than previous systems.

- Cloudflare turns AI against itself with endless maze of irrelevant facts

New approach punishes AI companies that ignore "no crawl" directives.

On Wednesday, web infrastructure provider Cloudflare announced a new feature called "AI Labyrinth" that aims to combat unauthorized AI data scraping by serving fake AI-generated content to bots. The tool will attempt to thwart AI companies that crawl websites without permission to collect training data for large language models that power AI assistants like ChatGPT.

Cloudflare, founded in 2009, is probably best known as a company that provides infrastructure and security services for websites, particularly protection against distributed denial-of-service (DDoS) attacks and other malicious traffic.

Instead of simply blocking bots, Cloudflare's new system lures them into a "maze" of realistic-looking but irrelevant pages, wasting the crawler's computing resources. The approach is a notable shift from the standard block-and-defend strategy used by most website protection services. Cloudflare says blocking bots sometimes backfires because it alerts the crawler's operators that they've been detected.

- Anthropic’s new AI search feature digs through the web for answers

Anthropic Claude just caught up with a ChatGPT feature from 2023—but will it be accurate?

On Thursday, Anthropic introduced web search capabilities for its AI assistant Claude, enabling the assistant to access current information online. Previously, the latest AI model that powers Claude could only rely on data absorbed during its neural network training process, having a "knowledge cutoff" of October 2024.

Claude's web search is currently available in feature preview for paid users in the United States, with plans to expand to free users and additional countries in the future. After users enable the feature in their profile settings, Claude will automatically determine when to use web search to answer a query or find more recent information.

The new feature works with Claude 3.7 Sonnet and requires a paid subscription. The addition brings Claude in line with competitors like Microsoft Copilot and ChatGPT, which already offer similar functionality. ChatGPT first added the ability to grab web search results as a plugin in March 2023, so this new feature is a long time coming.

- Study finds AI-generated meme captions funnier than human ones on average

Mollick proclaims "the meme Turing Test has been passed," but a new study offers a key caveat.

A new study examining meme creation found that AI-generated meme captions on existing famous meme images scored higher on average for humor, creativity, and "shareability" than those made by people. Even so, people still created the most exceptional individual examples.

The research, which will be presented at the 2025 International Conference on Intelligent User Interfaces, reveals a nuanced picture of how AI and humans perform differently in humor creation tasks. The results were surprising enough to have one expert declaring victory for the machines.

"I regret to announce that the meme Turing Test has been passed," wrote Wharton professor Ethan Mollick on Bluesky after reviewing the study results. Mollick studies AI academically, and he's referring to a famous test proposed by computing pioneer Alan Turing in 1950 that seeks to determine whether humans can distinguish between AI outputs and human-created content.

- Nvidia announces DGX desktop “personal AI supercomputers”

Asus, Dell, HP, and others to produce powerful desktop machines that run AI models locally.

During Tuesday's Nvidia GTC keynote, CEO Jensen Huang unveiled two so-called "personal AI supercomputers" called DGX Spark and DGX Station, both powered by the Grace Blackwell platform. In a way, they are a new type of AI PC architecture specifically built for running neural networks, and five major PC manufacturers will build them.

These desktop systems, first previewed as "Project DIGITS" in January, aim to bring AI capabilities to developers, researchers, and data scientists who need to prototype, fine-tune, and run large AI models locally. DGX systems can serve as standalone desktop AI labs or "bridge systems" that allow AI developers to move their models from desktops to DGX Cloud or any AI cloud infrastructure with few code changes.

Huang explained the rationale behind these new products in a news release, saying, "AI has transformed every layer of the computing stack. It stands to reason a new class of computers would emerge—designed for AI-native developers and to run AI-native applications."

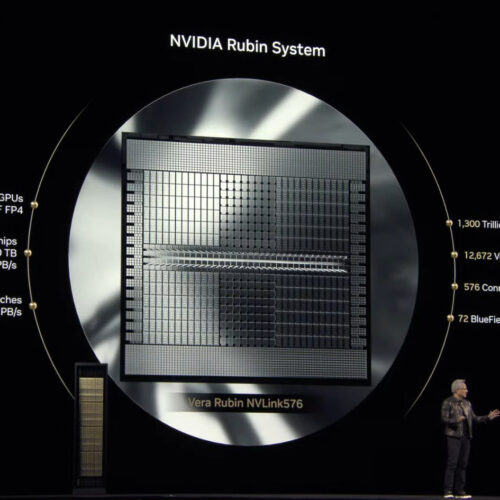

- Nvidia announces “Rubin Ultra” and “Feynman” AI chips for 2027 and 2028

CEO Jensen Huang says new chips will power robots and billions of AI agents.

On Tuesday at Nvidia's GTC 2025 conference in San Jose, California, CEO Jensen Huang revealed several new AI-accelerating GPUs the company plans to release over the coming months and years. He also revealed more specifications about previously announced chips.

The centerpiece announcement was Vera Rubin, first teased at Computex 2024 and now scheduled for release in the second half of 2026. This GPU, named after a famous astronomer, will feature 288 gigabytes of memory and comes with a custom Nvidia-designed CPU called Vera.

According to Nvidia, Vera Rubin will deliver significant performance improvements over its predecessor, Grace Blackwell, particularly for AI training and inference.

- Farewell Photoshop? Google’s new AI lets you edit images by asking.

New experimental AI allows no-skill photo editing, including removing watermarks. But it's not perfect.

There's a new Google AI model in town, and it can generate or edit images as easily as it can create text—as part of its chatbot conversation. The results aren't perfect, but it's quite possible everyone in the near future will be able to manipulate images this way.

Last Wednesday, Google expanded access to Gemini 2.0 Flash's native image-generation capabilities, making the experimental feature available to anyone using Google AI Studio. Previously limited to testers since December, the multimodal technology integrates both native text and image processing capabilities into one AI model.

The new model, titled "Gemini 2.0 Flash (Image Generation) Experimental," flew somewhat under the radar last week, but it has been garnering more attention over the past few days due to its ability to remove watermarks from images, albeit with artifacts and a reduction in image quality.

- Large enterprises scramble after supply-chain attack spills their secrets

tj-actions/changed-files corrupted to run credential-stealing memory scraper.

Open source software used by more than 23,000 organizations, some of them in large enterprises, was compromised with credential-stealing code after attackers gained unauthorized access to a maintainer account, in the latest open source supply-chain attack to roil the Internet.

The corrupted package, tj-actions/changed-files, is part of tj-actions, a collection of files that's used by more than 23,000 organizations. Tj-actions is one of many GitHub Actions, a type of tool for streamlining software development that's available on the GitHub developer platform. Actions are a core means of implementing what's known as CI/CD, short for Continuous Integration and Continuous Deployment (or Continuous Delivery).

Scraping server memory at scale

On Friday or earlier, the source code for all versions of tj-actions/changed-files received unauthorized updates that changed the "tags" developers use to reference specific code versions. The tags pointed to a publicly available file that copies the internal memory of servers running it, searches for credentials, and writes them to a log. In the aftermath, many publicly accessible repositories running tj-actions ended up displaying their most sensitive credentials in logs anyone could view.
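Why changing tags is enough to redirect every user can be shown with a toy model. This is a simplified sketch, not Git or GitHub code: the commit IDs, the tag name, and the `resolve` helper are all hypothetical. It assumes only that a tag is a mutable name pointing at a commit, while a commit ID always identifies the same content.

```python
# Toy repository: commit IDs are fixed, tags are names that can be repointed.
commits = {
    "a1b2c3": "legitimate release code",
    "deadbe": "credential-stealing code",
}
tags = {"v1": "a1b2c3"}


def resolve(ref: str) -> str:
    """Return the code a reference points to.

    A tag is looked up in `tags`; anything else is treated as a commit ID.
    """
    commit_id = tags.get(ref, ref)
    return commits[commit_id]


before = resolve("v1")        # what users of the tag ran originally
tags["v1"] = "deadbe"         # an attacker with write access repoints the tag
after = resolve("v1")         # the same reference now yields different code
pinned = resolve("a1b2c3")    # a reference by commit ID is unaffected

print(before, after, pinned, sep="\n")
```

In this model, anyone referencing `v1` silently picks up the new code, while a reference to the commit ID keeps resolving to the original content.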

- Researchers astonished by tool’s apparent success at revealing AI’s “hidden objectives”

Anthropic trains custom AI to hide objectives, but different "personas" spill their secrets.

In a new paper published Thursday titled "Auditing language models for hidden objectives," Anthropic researchers described how custom AI models trained to deliberately conceal certain "motivations" from evaluators could still inadvertently reveal secrets, due to their ability to adopt different contextual roles they call "personas." The researchers were initially astonished by how effectively some of their interpretability methods seemed to uncover these hidden training objectives, although the methods are still under research.

While the research involved models trained specifically to conceal information from automated software evaluators called reward models (RMs), the broader purpose of studying hidden objectives is to prevent future scenarios where AI systems might deceive or manipulate human users.

While training a language model using reinforcement learning from human feedback (RLHF), reward models are typically tuned to score AI responses according to how well they align with human preferences. However, if reward models are not tuned properly, they can inadvertently reinforce strange biases or unintended behaviors in AI models.
